Webflow + Cloudflare reverse proxy. Why and How

As more companies move to Webflow and the demand for Webflow Enterprise grows, you’ll see more teams leaning on reverse proxies to solve some of Webflow’s infrastructure limitations.

Namely, the need for a single domain that seamlessly hosts multiple projects from multiple platforms, such as Webflow, WordPress, Vercel, and others.

In this article, my goal is to give you an end-to-end understanding of what’s required to launch and manage your own reverse proxy.

How does this look without a reverse proxy?

Let's first imagine the vanilla scenario.

You've built your Webflow site, domain in hand, Webflow hosting paid for and you follow the Webflow instructions to change your DNS settings.

What you're doing is attaching the Webflow hosting environment to the domain.

domain.com = Webflow project

So when a user types in domain.com into their browser and presses search, a request gets sent to domain.com, and domain.com then returns a response with the Webflow project HTML, CSS and JS.

It's a 2-step process.

- Request domain.com

- domain.com returns your Webflow project

Okay, so why can't we just slap 2 Webflow projects on the domain together?

I’m glad you asked.

The short version: the internet only knows how to send traffic for a domain to one destination at a time.

When you connect a domain like domain.com to Webflow, your DNS records point that domain to Webflow’s hosting environment — their CDN, their servers, their IPs. So you can't also connect the same domain to Vercel, Framer, or WordPress hosting environments.

DNS doesn’t support “splitting” traffic between multiple providers. Each hostname (like domain.com or www.domain.com) can only point to one IP address or one platform. It’s a simple lookup table — not a traffic director. You can’t tell DNS to send /blog to WordPress and /app to Vercel. It’ll just send everything to whichever host the record points to.

Each of these platforms — Webflow, Vercel, Framer, WordPress, etc. — is its own hosted environment. They each manage their own infrastructure, serve their own files, and don’t have control over each other’s routing. Once traffic lands at Webflow, Webflow can only serve what’s inside your Webflow project. It can’t decide to forward /blog to another provider.

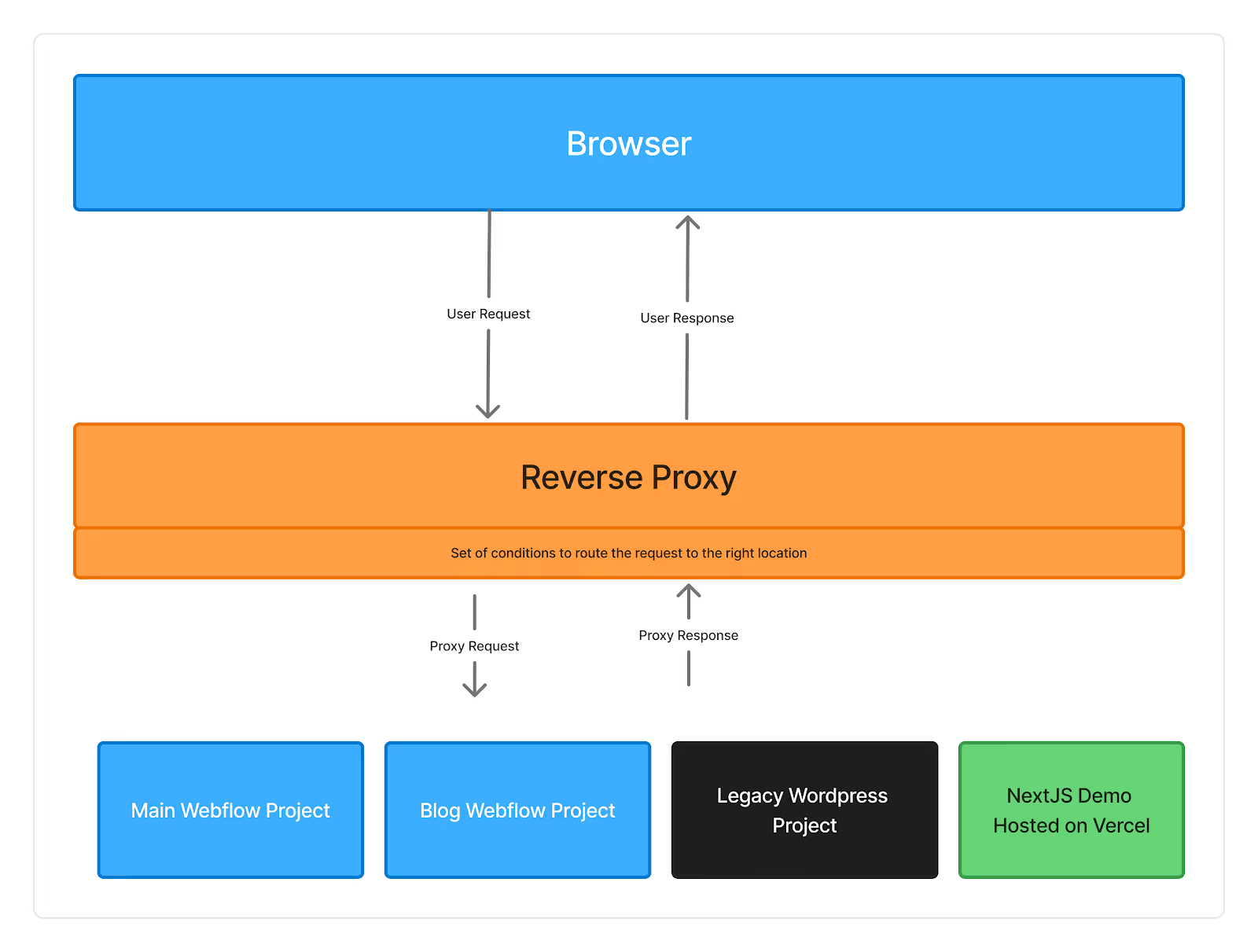

That’s where a reverse proxy comes in. A reverse proxy sits in front of all your projects and acts as the traffic director DNS can’t be. It looks at each incoming request and decides where to send it based on the URL path. For example:

domain.com → Webflow

domain.com/blog → Webflow proj #2

domain.com/app → Vercel

From the user’s perspective, everything still lives under one clean domain.

What even is a reverse proxy?

A reverse proxy (RP) is a small JavaScript function that is attached to your primary domain. It runs every time a browser requests your website.

Think of it as a router with logic.

When a user puts your site in their browser and presses search:

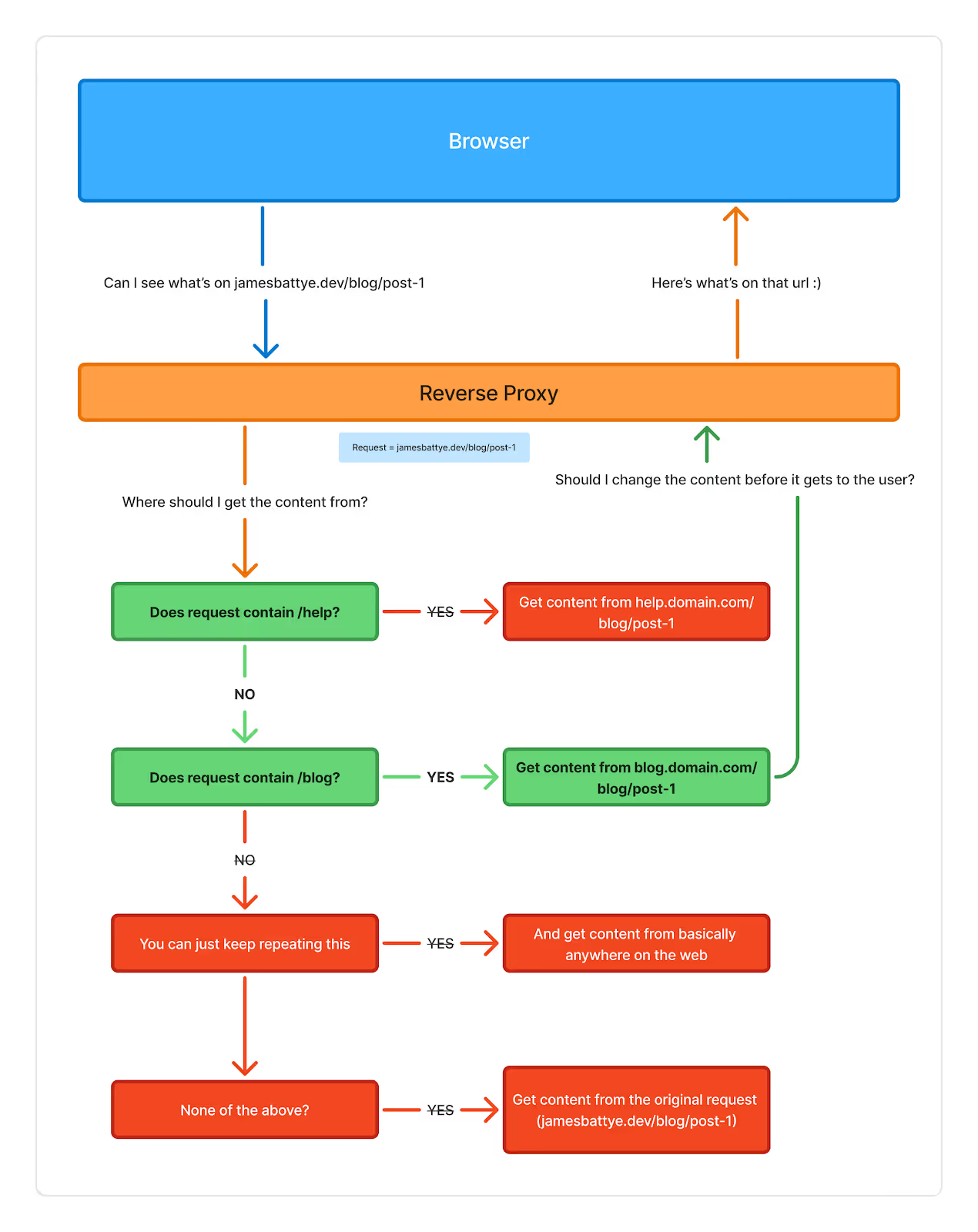

- The reverse proxy receives the request

- The reverse proxy code passes the request through a series of conditions (if/else statements)

- Is the request asking for the blog? (i.e. does the request url have /blog)

- YES: Fetch the page from the Webflow project hosted on blog.domain.com

- Webflow the request asking for the help center? (i.e. does the request url have /help)

- YES: Fetch the page from the Webflow project hosted on help.domain.com

- Is the request asking for none of the above?

- YES: Fetch the page from the Webflow project hosted on wf.domain.com

- Is the request asking for the blog? (i.e. does the request url have /blog)

- Once the request is recieved, the reverse proxy code has an opportunity to manipulate the response (I.e. add new HTML, make fetch requests and add API data in the HTML so crawlers can see it)

- The reverse proxy returns the response to the user.

The beauty of this setup lies in its flexibility.

You can host multiple Webflow projects, CDNs, and even external apps. The reverse proxy stitches them all together under one domain. It’s invisible to the user but incredibly powerful for developers, especially in enterprise or multi-site builds.

What can we do with a reverse proxy?

1. We can attach any web-hosted content to our main domain.

Here’s a non-exhaustive list of possibilities:

- Host multiple Webflow projects under one domain

- Migrate key pages of an enterprise site to Webflow and leave the rest on legacy systems for later phases

- Rebuild or replace a poorly structured Webflow project with minimal risk or downtime

- Host custom functionality such as a Next.js, React, or Vite app (for example, a demo or calculator)

- Combine Webflow and WordPress projects under one domain

In short, a reverse proxy provides the freedom to mix and match platforms while maintaining a cohesive and consistent experience for your users.

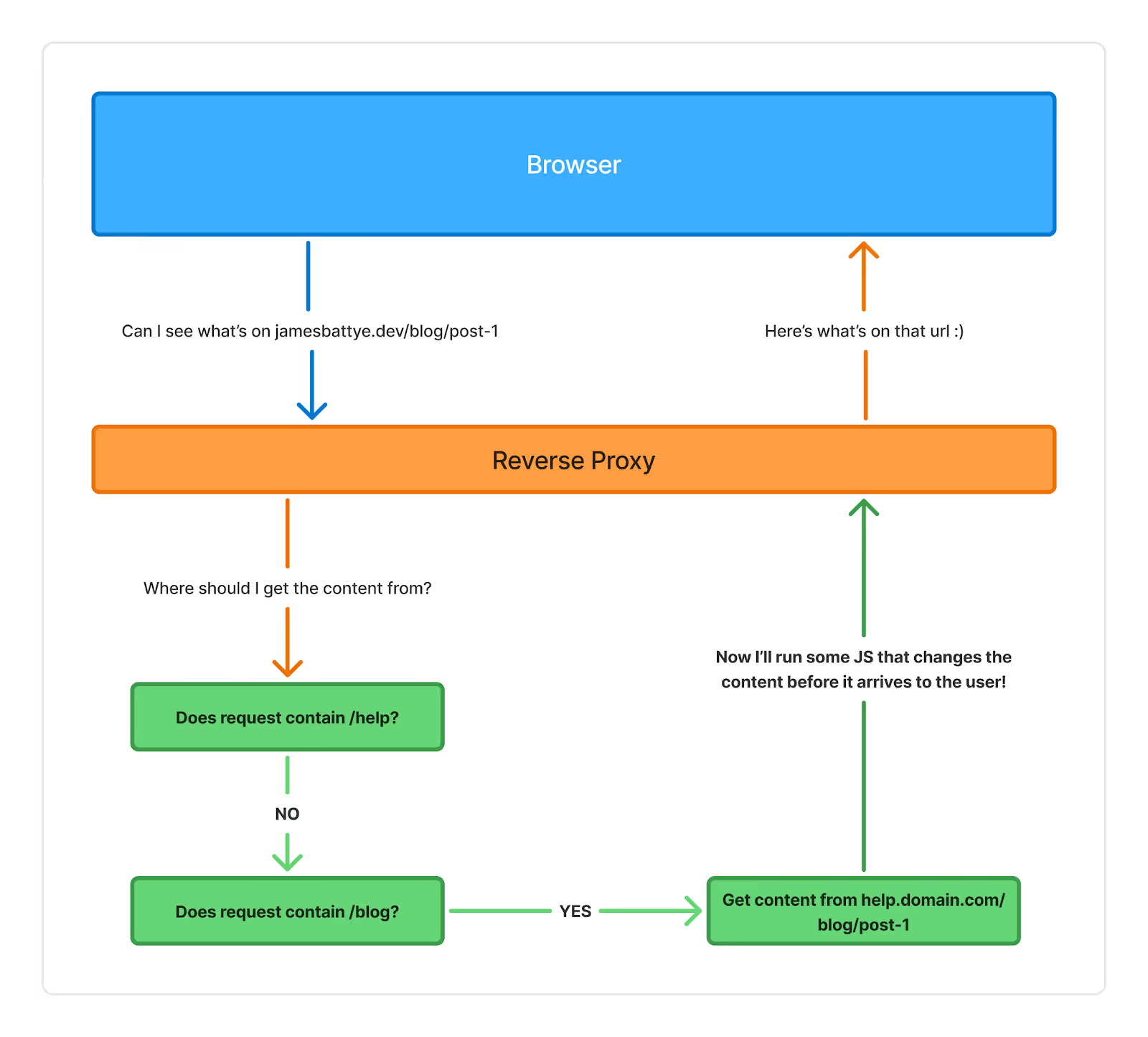

2. We can run JavaScript before the user (or web crawlers) get the page

TLDR: Crawlers typically don't render any JS. so if you're fetching data or doing calculations that you want Google and ChatGPT to see, then you need to make sure its happening before they get it.

Currently, it's pretty hit-or-miss whether crawlers render your client-side JS.

Google might render JS... sometimes... I think.

Even when it does render it, it can still be a net negative.

Here's a snippet from this post about how Google prioritises non-JS rendering.

"Googlebot has separate queues for regular crawling and rendering. It does a first pass to grab the server supplied HTML then it comes back later to do the rendering. Google made some announcements that typical delay between first crawl and rendering is now down to seconds. Despite that, websites that require rendering often seem to lag in indexing by days or weeks compared to pages that don't need to be rendered. See Rendering Queue: Google Needs 9X More Time To Crawl JS Than HTML | Onely"

Not to mention, only Google and Bing render JS. Any other browsers, LLMs, and similar tools will not render your JavaScript.

Our reverse proxy allows us to manipulate the response from Webflow before the user (or crawler) sees it.

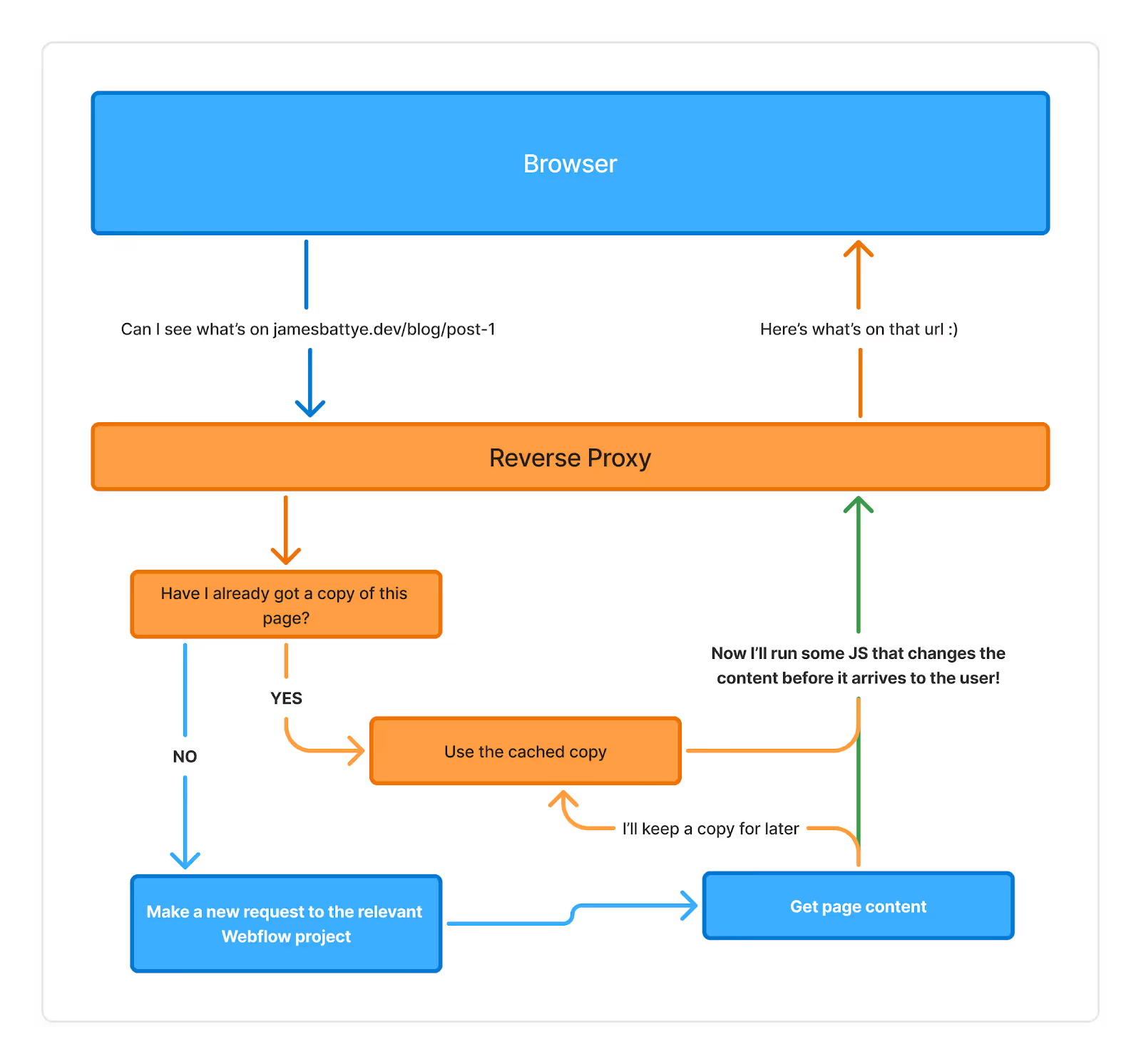

3. Reduce Webflow Bandwidth

Because your Cloudflare RP is taking all the requests on behalf of Webflow, you get the benefits of Cloudflare's fantastic caching, which means that most requests are returned instantly without ever having to query Webflow.

How to Manage a Reverse Proxy (Cloudflare, Wrangler, GitHub Actions)

Now that we understand what a reverse proxy is, let’s look at how this might work in a professional setup.

There are really two main ingredients here.

Github & Github Actions

All your source code lives in GitHub. It acts as your single source of truth, as per industry standard.

Developers contribute through a standard GitHub workflow, which includes branches, pull requests, and reviews. That process itself is outside the scope of this article. I’ll cover it in more detail another day.

For now, what matters is that our code is safe and secure on GitHub and we can use GitHub Actions.

GitHub Actions handle the automation, bundling, minification, and optimisation of your code, then send it to Cloudflare for deployment.

Cloudflare & Wrangler

Once your code is built and ready, Cloudflare takes over.

Your reverse proxy runs on Cloudflare Workers, lightweight JavaScript functions that execute on Cloudflare’s global edge network. This means your routing logic, caching, and transformations occur closer to your users, resulting in faster performance and lower latency.

I use Wrangler, Cloudflare’s command-line tool, to manage and deploy these Workers.

When paired with GitHub Actions, I can automate my entire workflow from code changes to live deployment with version control built in.

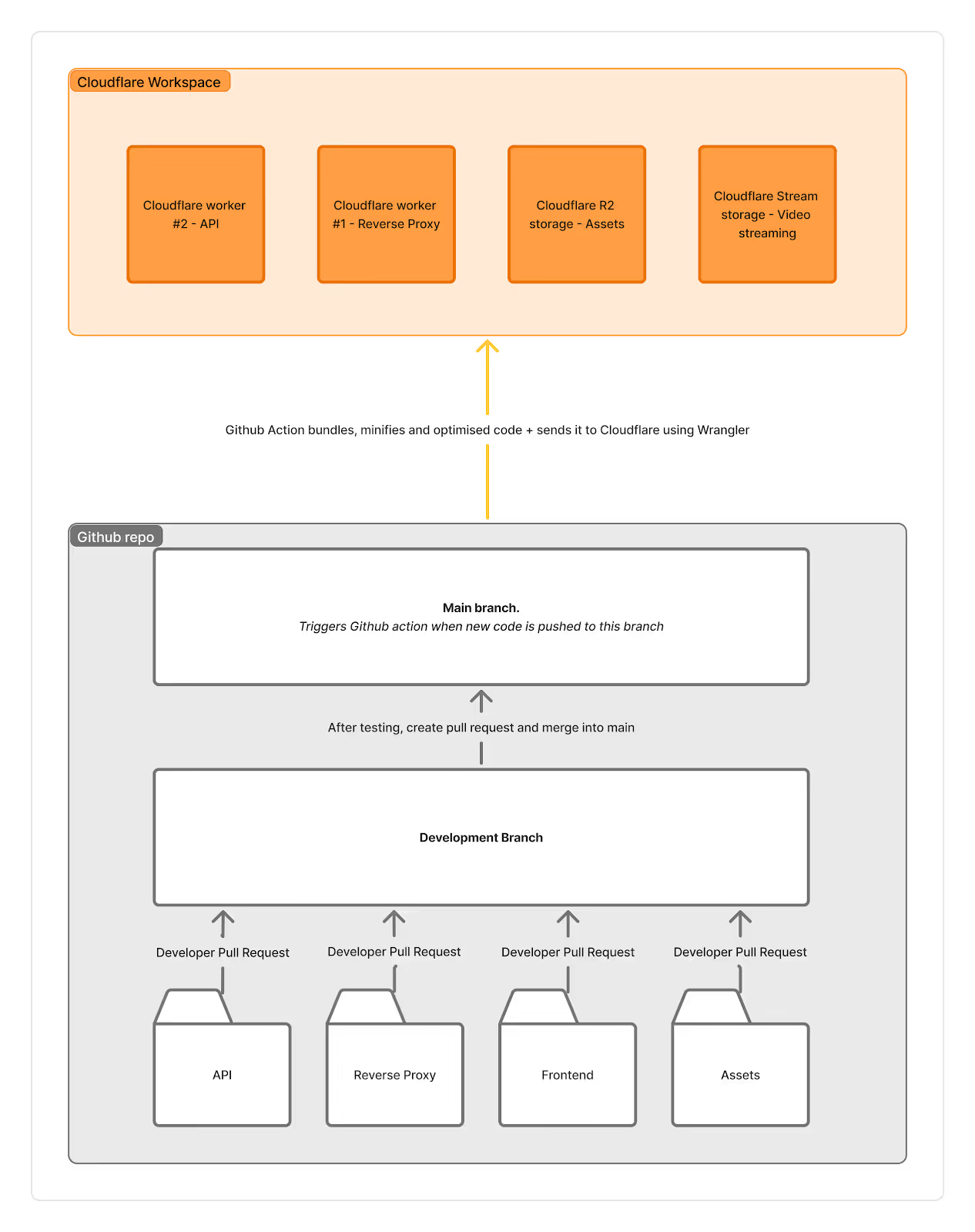

In the diagram below, you can see the workflow play out.

We have one GitHub repository that contains folders for our API, Reverse Proxy, Frontend code (including GSAP, animations, and client-side JavaScript), and assets (videos, 3D models, textures, etc.).

That repository has two branches: Dev and Main.

When a new update is pushed to the main branch, it triggers the GitHub action.

The GitHub action then bundles all your code to be hyper-efficient and ships it to Cloudflare.

Cloudflare then updates our workers and storage.

In the diagram, you can see we have a worker for the API and a worker for the reverse proxy. Why not have both as 1?

Because we need them on different domains and to fire at other times.

The reverse proxy fires every single time. No exception.

The api endpoints are only fired when you request those specific URLs.

We also have some R2 storage (which is just a cdn) for our static files, like 3d models, videos or images we need consistent URLs for.

Additionally, we can also add Cloudflare streaming if you prefer a more efficient video experience.

Put it all together, and you’ve got an enterprise-grade JavaScript and reverse-proxy pipeline that actually performs. Clean, scalable, and fast. That’s the goal.

I built a VSCode extension

Webflow Project Syncer brings autocomplete for your Webflow CSS classes and data attributes directly into VSCode.

Introducing Swiperflow

Attribute based swiper library. Create custom, CMS & Static sliders with just attributes.

Managing UI State in Webflow with JavaScript

A concise introduction to front-end reactivity, showing why data should control the UI and how to create and watch reactive variables using Vue’s reactivity system, with practical examples.

Using HonoJS with your reverse proxy

Hono.js replaces messy if/else chains with clean, readable routing to build a reverse proxy that scales

Reverse Proxy First Steps

In the next ~30 minutes, you'll have two different sites running under one URL.

Webflow Reverse Proxy Overview: What It Is, Why It Matters, and When to Use It

Reverse proxies are the gateway drug to Webflow Enterprise. Almost every enterprise project I’ve been involved in has implemented it in some form.

Building a Webflow to Algolia Sync with Cloudflare Workers

Build an automated sync between Webflow's CMS and Algolia's search service using Cloudflare Workers.

Using Videos Effectively in Webflow (Without Losing Your Mind)

If you’ve ever used Webflow’s native background video component and thought “damn, that looks rough” I'm here for you.

How (and why) to add keyboard shortcuts to your Webflow site

A small keyboard shortcut can make a marketing site feel faster, more intentional, and “app-like” with almost no extra design or development

Useful GSAP utilities

A practical, code-heavy dive into GSAP’s utility functions—keyframes, pipe, clamp, normalize, and interpolate—and why they’re so much more than just shortcuts for animation math.

Using Functions as Property Values in GSAP (And Why You Probably Should)

GSAP lets you pass _functions_ as property values. I've known this for a while but never really explored it particularly deeply. Over the last couple of weeks I've been testing, experimenting and getting creative with it to deepen my understanding.

Organising JavaScript in Webflow: Exploring Scalable Patterns

Exploring ways to keep JavaScript modular and maintainable in Webflow — from Slater to GitHub to a custom window.functions pattern. A look at what’s worked (and what hasn’t) while building more scalable websites.